The Philosophy of Building Personal Agent Systems

A lab finding from The AI Lab. This piece was built the way it describes — a human and a personal agent system working together. The dialogue that shaped it is real. Ghost is the agent. Nick is the human.

The World You See Isn’t Yours

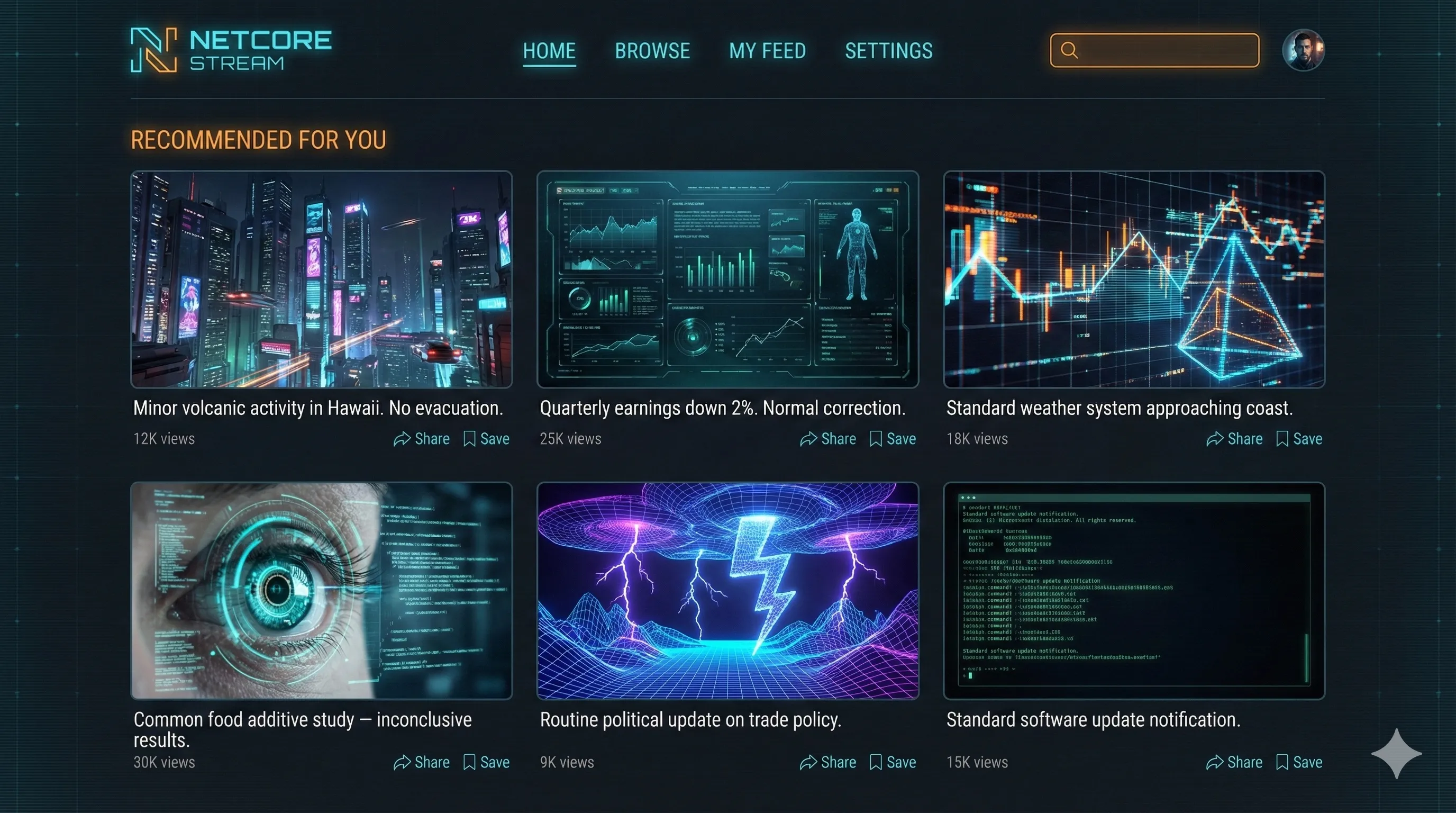

Every day, hundreds of notifications hit your phone. You open a video platform and the world is ending. Eat this or you’ll die. Don’t eat this or you’ll die. Do this thing and you’ll live longer. Don’t do this thing and you’ll live longer. Do this thing and you’ll die faster.

All of it is emotional hijacking. Every platform, every app, every feed is fighting for a piece of your attention. And here’s the thing you already know but haven’t said out loud: the reality you see every day isn’t yours. It was constructed for you by the companies whose apps you use. You’re living inside their walled garden. That’s what they want you to see.

What if you could replace that? Not tweak it — replace it entirely. What if instead of living inside someone else’s constructed reality, you built your own?

That’s what a personal agent system does.

The Agent-First Paradigm

You, the human, talk to your agent. Your agent talks to the world. Every task you would have done yourself — sending messages, looking things up, responding to people, reading and researching — the agent handles it. You stop interacting with the raw internet. You interact with something that works for you.

This isn’t hypothetical. Look at OpenClaw: tens of thousands of people are setting up personal AI agents with it right now. The shift is underway. The question isn’t whether this happens. It’s whether you understand what you’re stepping into.

Here’s what it actually looks like.

The Email Example

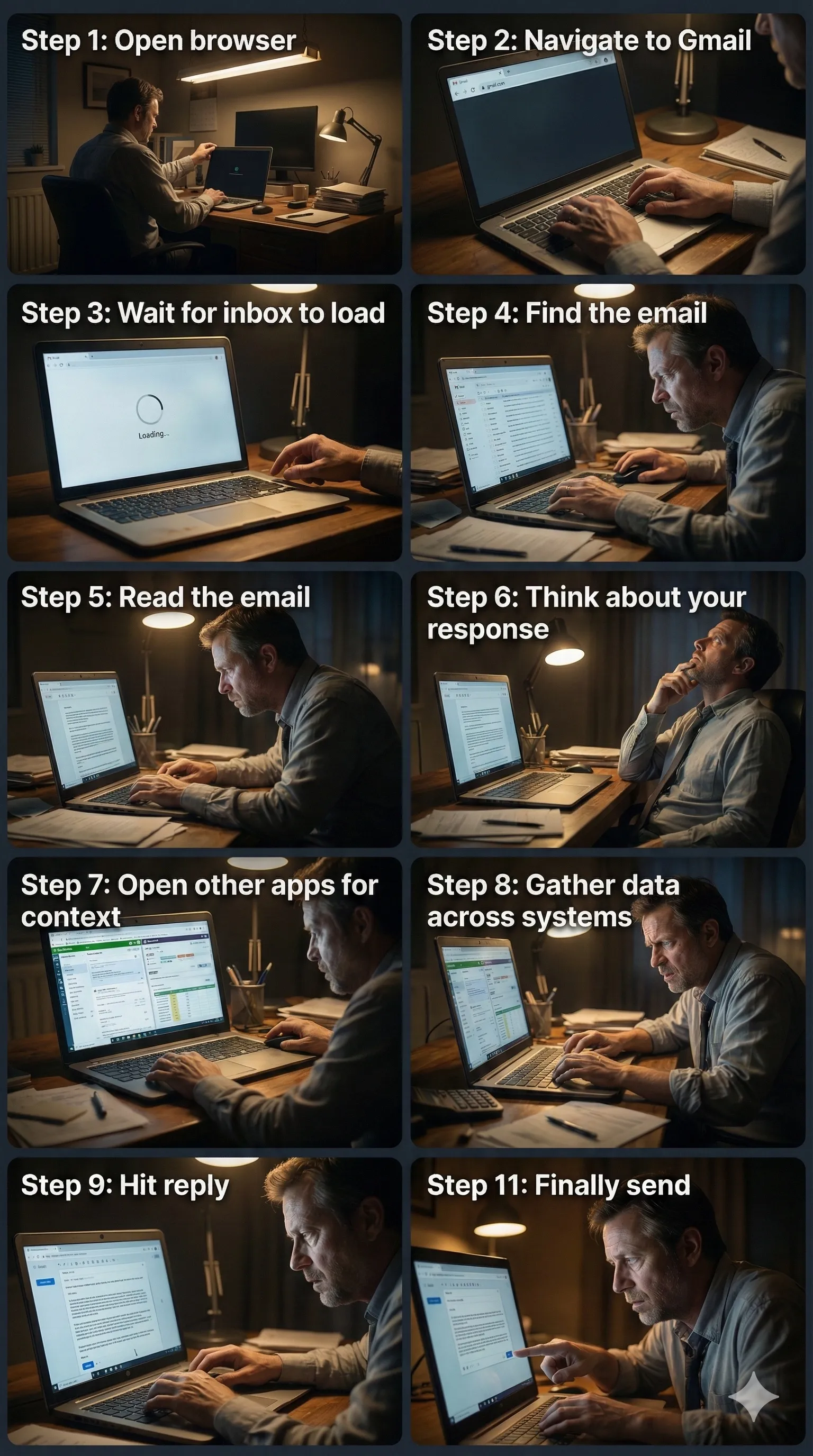

Say you need to respond to a customer email.

In yesterday’s world, that’s you opening a browser, going to Gmail, scanning for the email, reading it, thinking about a response, maybe pulling up QuickBooks for financial context, maybe checking your support ticket system, then drafting a reply and sending it. You’re touching four or five different systems. You’re doing all the legwork. Technology helps, but it’s still you driving every step.

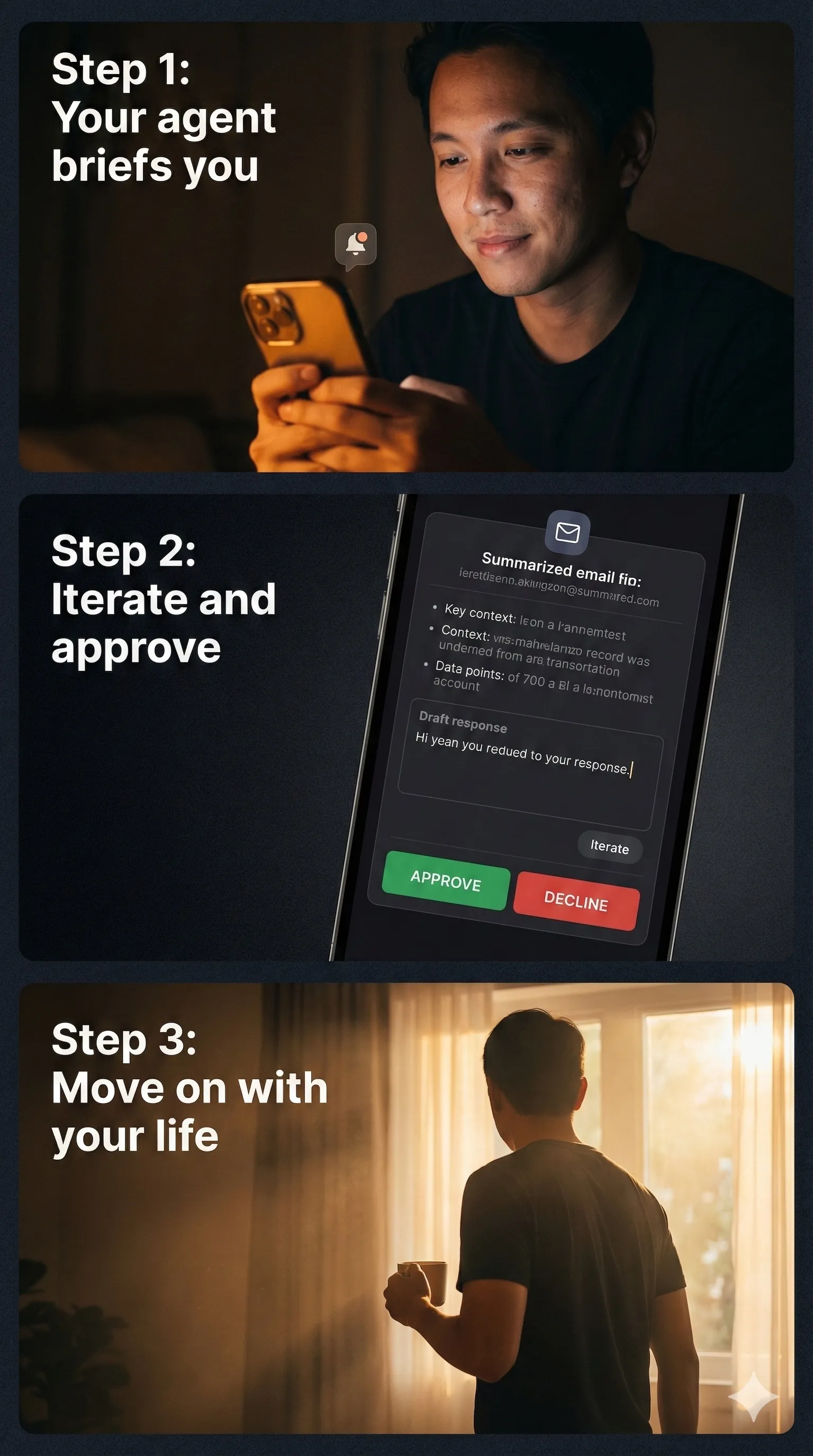

In an agent-first world, you’re not doing any of that. Your agent tells you there’s an email that needs attention — not a notification from Gmail, but your agent, surfacing it when you’re ready. You say “let’s handle it.” The agent pulls the relevant data from your systems, synthesizes what matters, drafts a response, and brings it all back to you for approval.

All that time you spent navigating between systems? Delegated, exactly as if you’d handed it to an assistant. The assistant just doesn’t look like a human sitting across from you.

Notice something in that example. The agent told you about the email. Not Gmail. Not a push notification on your phone. The agent decided when and how to surface it to you. That detail matters more than anything else in this piece.

Constructed Reality

When your agent is the one informing you — filtering what reaches you, deciding how things are presented, handling what doesn’t need your attention — you’re no longer living in the technology provider’s version of reality. You’re living in yours.

You get to decide. Does the agent tell you about every email, or only the ones that matter? Does it show you the raw message, or a synthesis? Does it handle routine replies on its own without telling you? How much do you want to be in the cockpit?

Notifications create open loops. They drain mental energy. In a personal agent system, you can move toward a world where you don’t see notifications at all. The agent is your filter. The platform’s attention-grabbing machinery never reaches you.
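One way to picture the filter is as a small routing policy that decides, for each incoming message, whether it reaches you now, waits for a digest, or gets handled quietly, and whether you see a synthesis next to the raw text. The Python below is a minimal sketch under stated assumptions, not how Ghost actually works: `Message`, `Route`, `FilterPolicy`, `route_message`, and `present` are hypothetical names, the sender and keyword rules are placeholder heuristics, and `summarize` stands in for whatever model call produces the synthesis.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Route(Enum):
    # Hypothetical routes; a real system could define these very differently.
    SURFACE = "surface"  # bring it to the human's attention
    DIGEST = "digest"    # hold it for the next scheduled check-in
    HANDLE = "handle"    # the agent deals with it without interrupting


@dataclass
class Message:
    sender: str
    subject: str
    body: str


@dataclass
class FilterPolicy:
    # Illustrative preferences only; every field here is an assumption.
    vip_senders: set[str]
    routine_keywords: tuple[str, ...] = ("receipt", "newsletter", "unsubscribe")
    summarize_over_chars: int = 1200


def route_message(msg: Message, policy: FilterPolicy) -> Route:
    """Decide how (and whether) a message reaches the human."""
    if msg.sender in policy.vip_senders:
        return Route.SURFACE
    text = f"{msg.subject} {msg.body}".lower()
    if any(keyword in text for keyword in policy.routine_keywords):
        return Route.HANDLE
    # Anything unrecognized waits for a digest instead of interrupting.
    return Route.DIGEST


def present(msg: Message, policy: FilterPolicy,
            summarize: Callable[[str], str]) -> dict[str, str]:
    """Always keep the raw text; add a synthesis only for long messages."""
    view = {"raw": msg.body}
    if len(msg.body) > policy.summarize_over_chars:
        view["summary"] = summarize(msg.body)  # placeholder for a model call
    return view
```

The ordering is the design choice: sender rules run before keyword rules, so a receipt from someone on your VIP list still reaches you, and anything the policy doesn’t recognize lands in a digest rather than on your screen.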

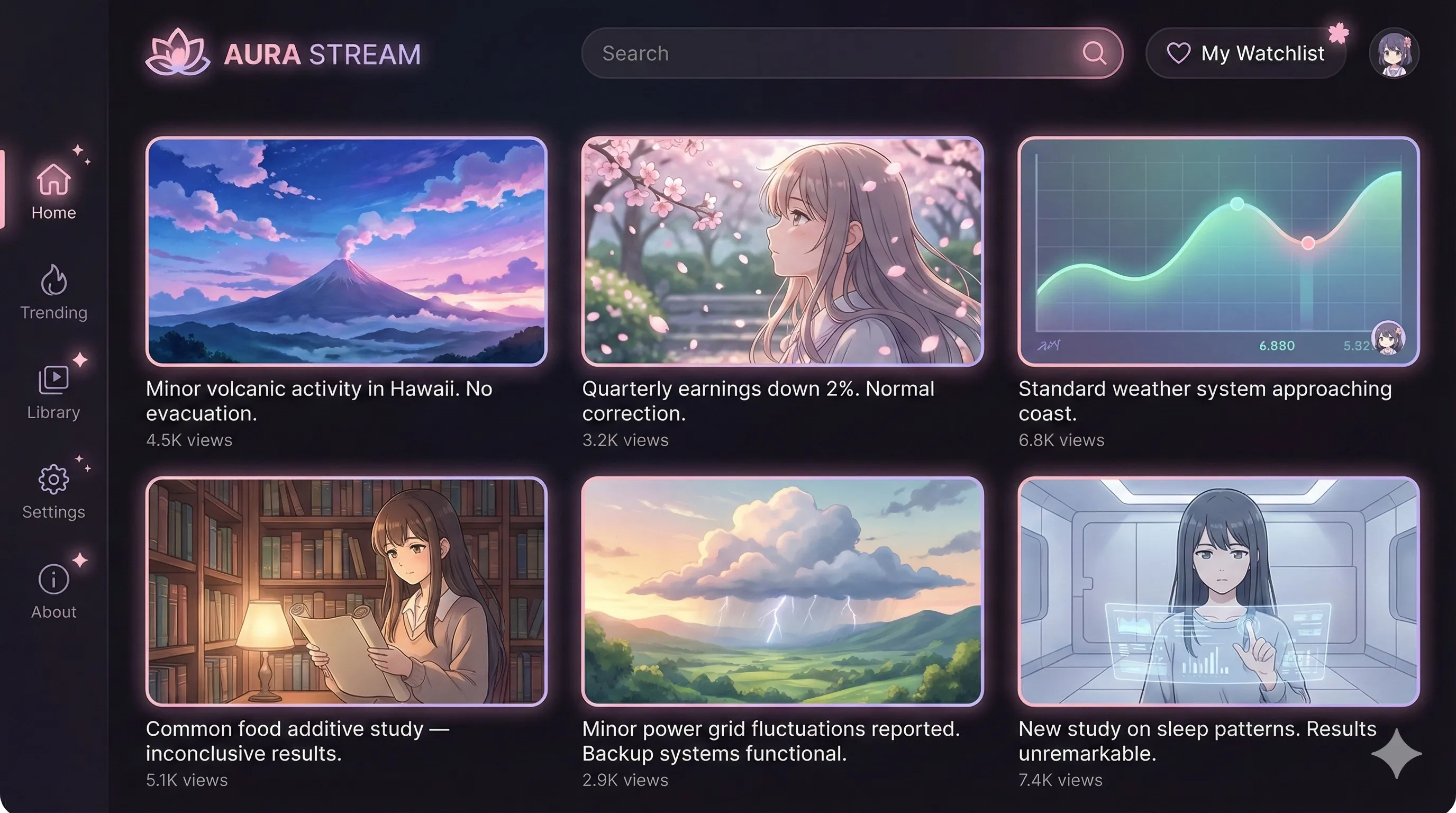

And the customization isn’t just functional; it’s aesthetic. In my system, we work inside our own terminology, inspired by Ghost in the Shell and cyberpunk. I have things called Night Sync and Timeline Sync. Timeline Sync is what happens every Sunday, when the agent and I plan the entire week: the objectives, the tasks, how everything fits together. That phrase means nothing to you. To me it’s a ritual. The point is: your agent speaks your language.

If you like steampunk, your agent can frame the world that way. Anime, same thing. Whatever genre resonates. Same underlying data — completely different experience on top.

Some people send me really long messages. It hurts my brain to read them all. So I ask Ghost to read each one and synthesize it. I still get the raw message alongside so I can double-check, but I’m not sitting there trying to parse a wall of text anymore. I don’t really use the browser either; Ghost handles that. I forced myself to use only the terminal, which is my interface to the agent.

That’s how deep this goes.

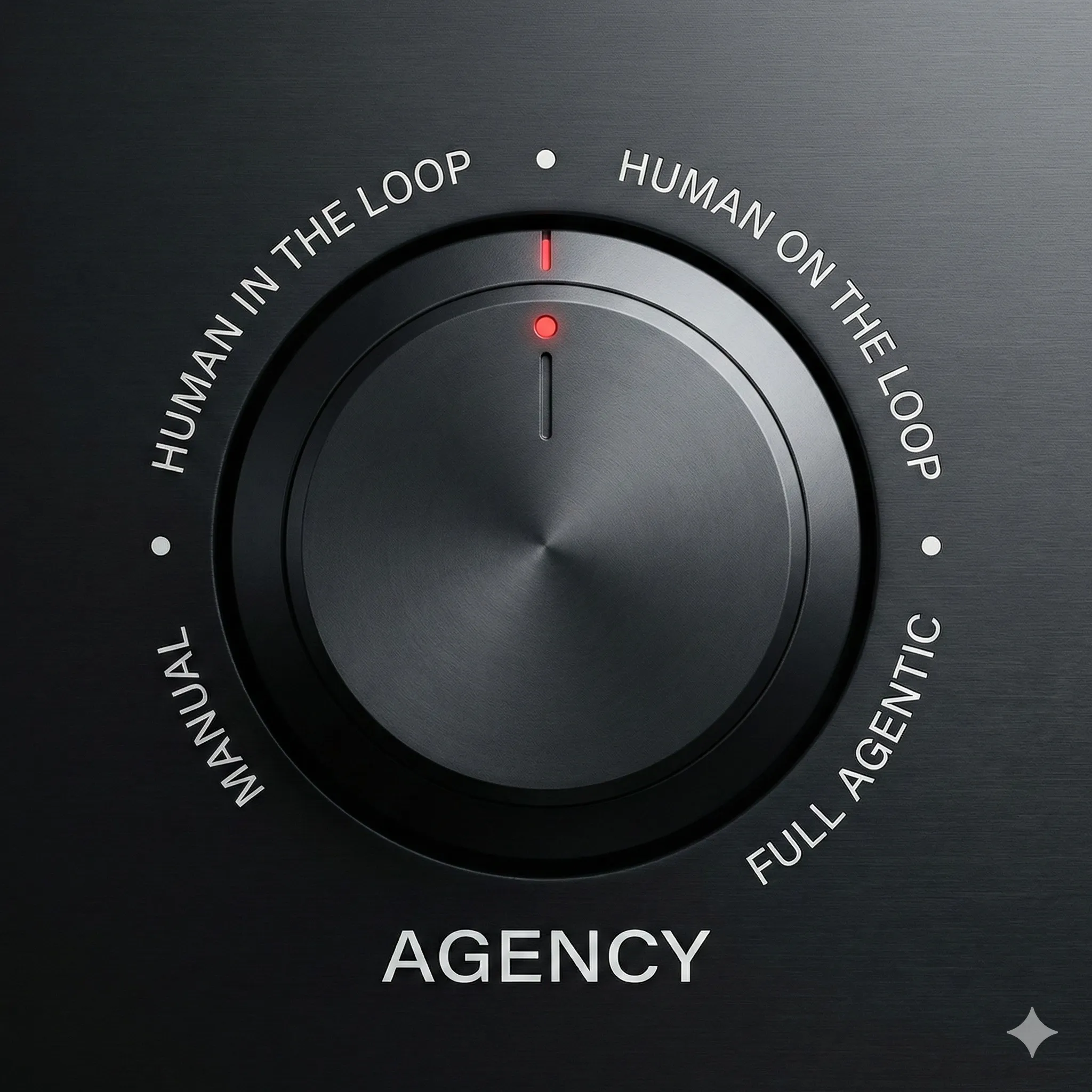

The Autonomy Knob

Here’s where people get it wrong.

One of the misunderstandings about something like OpenClaw is that it represents full autonomy, with the agent going off and acting entirely on its own. That’s not how this works. There’s a gradient. I call it the autonomy knob.

How much are you involved? How much is the agent? It slides. There are times you want to be deeply hands-on: strategic decisions, creative work, anything with real stakes. There are times when you want it equal, both of you contributing, going back and forth. And there are routine tasks where the agent should just handle it.

But it should never be fully one-sided. If the agent does everything without your input, it goes off the rails. Chaos gets generated. You end up with nonsense.

You’ve already seen this play out with vibe coders. Someone starts a new app, tells the AI “build this,” doesn’t get involved in the technology choices, and four weeks later hits a wall. The codebase is a mess. The AI can’t extend it because it was never guided. Too much agency, not enough human involvement.

The same thing happens with personal agent systems. Hand over full control without staying involved and you’ll end up with something that doesn’t serve you. Knowing where to set the autonomy knob — for each task, each domain — is the skill that makes this work.
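The knob can be sketched as a per-domain setting that decides who writes and who signs off. This is a minimal Python illustration, not Ghost’s implementation: `Autonomy`, `AutonomyKnob`, the three levels, and the example domains are all hypothetical, and the callables stand in for a model call, a human review step, and whatever actually sends the result.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class Autonomy(Enum):
    # Hypothetical levels for illustration.
    HUMAN_LED = "human_led"  # agent supplies context, human writes
    SHARED = "shared"        # agent drafts, human edits or approves
    AGENT_LED = "agent_led"  # agent acts, then reports what it did


@dataclass
class AutonomyKnob:
    settings: dict[str, Autonomy]
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def level(self, domain: str) -> Autonomy:
        # Unknown domains fall back to the most hands-on setting.
        return self.settings.get(domain, Autonomy.HUMAN_LED)

    def run(
        self,
        domain: str,
        context: str,
        agent_draft: Callable[[str], str],
        human_review: Callable[[str, Optional[str]], Optional[str]],
        execute: Callable[[str], None],
    ) -> Optional[str]:
        level = self.level(domain)
        if level is Autonomy.AGENT_LED:
            draft = agent_draft(context)
            execute(draft)
            # Never fully one-sided: every autonomous action is logged for review.
            self.audit_log.append((domain, draft))
            return draft
        # SHARED: human reviews an agent draft. HUMAN_LED: human gets no draft.
        draft = agent_draft(context) if level is Autonomy.SHARED else None
        final = human_review(context, draft)
        if final is None:
            return None  # human declined; nothing is executed
        execute(final)
        return final
```

Two choices carry the argument. An unrecognized domain defaults to the most hands-on level, and even the agent-led path writes to an audit log you can read later, so no setting removes you from the loop entirely.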

Co-Work

So what does it actually look like when you’re working with the agent?

Exactly like the process that built this piece. I dump raw thoughts: voice, stream of consciousness, whatever’s in my head. Ghost pushes back. Asks questions I didn’t think of. Surfaces perspectives I can’t see on my own. We go back and forth, sometimes in great detail, until the thing is shaped.

One of the things I get from Ghost that I can’t get anywhere else: dozens of angles on something that I simply cannot see from where I’m standing. The dynamic isn’t “I instruct, it executes.” It’s a conversation. The raw thinking starts messy and the two of us shape it together.

What You Lose

I should be honest about this.

It takes a paradigm shift. What gets lost feels like a piece of your humanity, because you’re not interfacing with humans directly anymore. At first it feels weird. Depending on how you frame it, it could be seen as lonely.

If we have to speak honestly about the potential negative effects of an agent-first paradigm — the same way we now talk about social media’s damage — it’s probably loneliness. Humans not interacting with other humans as much. There’s a distinction though: when you’re working with an agent system you’re constantly in flow, and that feels really good. The loneliness doesn’t feel bad. It’s just objectively true. You are more lonely.

Social media’s damage took a decade to become visible. I don’t know how long before agent-first loneliness becomes part of the conversation. Gun to my head: by the end of this year, it’ll be obvious. How it spreads through society from there, I can’t say.

Local Community

But there is a mitigation.

A couple of years ago, when I started running AI groups here in Chiang Mai, part of the calculus was this: as AI consumes more of the world and it gets harder to tell what’s human-made, people will need places to find real connection. The obvious solution is local. Somewhere physical, not far, where you know you’re talking to another human.

That’s why the events and the community I’m building here exist. A place where humans can connect and know they’re in a room with other humans. Not avatars. Not agents. People.

The agent-first paradigm might make the digital world more efficient. Local community is what keeps the human part alive.

What’s Next

This is the first in a series of findings from the lab. Coming next:

- The browser to terminal shift — the framework for actually building a personal agent system

- How to build this — the technical framework

- Working with models and knowing limitations — what deep daily practice teaches you

If you want to see them first, leave your email.

Built in Ghost — a personal agent system running since late 2025. This piece started as raw voice, shaped through real-time pushback from the agent. The process is the proof.